
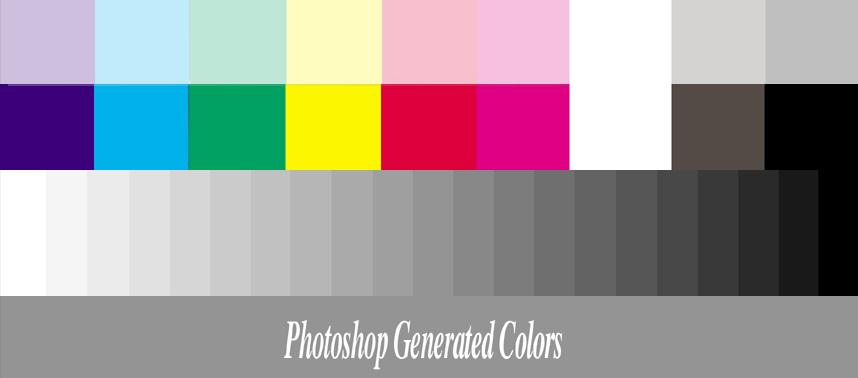

Other people will no doubt have opinions on this, but I think the channel match tool is probably not the right thing to fix this. Rather, you need to look at your alignment process, as John says.

There are multiple ways to get there, but here is the process that I use:

1. Choose the best of the luminance subs, and use that as the alignment reference in the Star Align tool for all subs of all channels. Since my RGB subs are binned 2x2, aligning them using a 1x1 binned luminance reference also takes care of the interpolation at the same time.
2. Integrate each of the separate stacks of channel subs to get my R, G, B & L frames.
3. Combine the RGB, keeping L separate.
4. With RGB and L frames open, temporarily create a combined LRGB frame. This shows the limits of the combined stack, where parts of the edges are not covered by all subs due to misalignment.
5. Open a Dynamic Crop tool, and draw a box in the temporary LRGB frame that encompasses only fully covered areas.
6. Drag the new instance icon from the Dynamic Crop onto each of the L and RGB frames.
7. Save the cropped RGB and L and discard the temporary LRGB.

At this point, I have cropped and registered L & RGB frames to do further work on, which I can (much) later combine with the LRGB Combine tool.

Using a color correction/color constancy card, we can:

1. Detect the color correction card in an input image.
2. Compute the histogram of the card, which contains graded patches of varying hues, shades, blacks, whites, and grays.
3. Apply histogram matching from the color card to another image, thereby attempting to achieve color constancy.

In this tutorial, we'll build a color correction system with OpenCV by putting together all the pieces we've learned from previous tutorials on: Detecting ArUco markers with OpenCV and Python; OpenCV Histogram Equalization and Adaptive Histogram Equalization (CLAHE); and Histogram matching with OpenCV, scikit-image, and Python.

By the end of the guide, you will understand the fundamentals of how color correction cards can be used in conjunction with histogram matching to build a basic color corrector, regardless of the illumination conditions under which an image was captured.

To learn how to perform basic color correction with OpenCV, just keep reading.

Figure 2: In the top and bottom photos, examine the second card from the left (i.e., the pink one). In the upper image, the card appears to be a stronger shade of pink versus the lower photo, where the pink is more subdued. Both of these cards have the same RGB values, but our perception is impacted by the photo's color cast (image source).

Looking at this card, it seems that the pink shade (second from the left) is substantially stronger than the pink shade on the bottom - but as it turns out, they are the same color! Both these cards have the same RGB values. However, our human color perception system is affected by the color cast of the rest of the photo (i.e., applying a warm red filter on top of it). That creates a bit of a problem if we seek to normalize our image processing environment.

As I stated in my previous tutorial on Detecting low contrast images: It's far easier to write code for images captured in controlled conditions than in dynamic conditions with no guarantees. The more we can control our image capturing environment, the easier it will be to write code to analyze and process the images captured in it. Suppose we can safely assume the lighting conditions of an environment.
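The card-based steps described in the tutorial text (compute the histogram of the card, then apply histogram matching) can be sketched in a few lines of plain Python. This is only a minimal illustration, not the tutorial's own implementation: it assumes 8-bit channel values, assumes the card has already been detected and cropped, and the names `cumulative_histogram`, `channel_lookup`, `match_channel` and `match_rgb` are hypothetical helpers invented for this example.

```python
from bisect import bisect_left
from itertools import accumulate


def cumulative_histogram(values):
    """Normalized cumulative histogram (CDF) of 8-bit values, 256 bins."""
    if not values:
        raise ValueError("need at least one pixel value")
    hist = [0] * 256
    for v in values:
        hist[v] += 1
    total = len(values)
    return [count / total for count in accumulate(hist)]


def channel_lookup(source, reference):
    """Build a 256-entry table mapping source levels to reference levels.

    Each source level is sent to the first reference level whose CDF
    reaches the source CDF at that level.
    """
    src_cdf = cumulative_histogram(source)
    ref_cdf = cumulative_histogram(reference)
    return [min(bisect_left(ref_cdf, src_cdf[level]), 255)
            for level in range(256)]


def match_channel(source, reference):
    """Histogram-match one channel (a flat list of ints in 0..255)."""
    lookup = channel_lookup(source, reference)
    return [lookup[v] for v in source]


def match_rgb(source_pixels, reference_pixels):
    """Match each of the three channels independently.

    Both arguments are lists of (r, g, b) tuples, e.g. the pixels of the
    card as photographed and the pixels of a reference card image.
    """
    matched = [
        match_channel([p[c] for p in source_pixels],
                      [p[c] for p in reference_pixels])
        for c in range(3)
    ]
    return list(zip(*matched))


if __name__ == "__main__":
    dark_card = [10, 10, 20, 30]           # made-up values for one channel
    reference_card = [100, 150, 200, 250]  # made-up reference values
    print(match_channel(dark_card, reference_card))
```

Plain lists keep the sketch self-contained. For real images, NumPy arrays and `skimage.exposure.match_histograms` from scikit-image are the more practical route.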
